Modern businesses generate enormous amounts of data every day. From customer interactions and sales records to website analytics and cloud applications, organizations depend on data for decision-making and growth. However, collecting data alone is not enough. Companies also need to move, transform, and organize that data efficiently.

This is where ETL process optimization becomes extremely important.

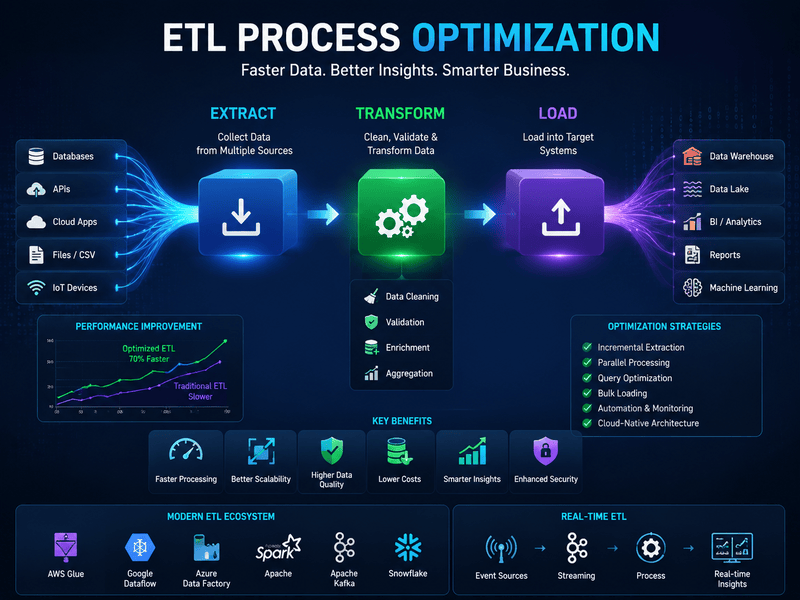

ETL stands for Extract, Transform, and Load. It is a critical data integration process used to collect information from multiple sources, convert it into a usable format, and load it into databases or data warehouses for analysis. Businesses across industries rely on ETL systems to support reporting, analytics, machine learning, business intelligence, and operational workflows.

As data volumes continue growing rapidly, organizations often face performance bottlenecks, slow processing times, data quality issues, and increasing infrastructure costs. Without proper optimization, ETL workflows can become inefficient and difficult to scale.

ETL process optimization focuses on improving the speed, reliability, scalability, and efficiency of data pipelines. Optimized ETL systems reduce delays, improve data accuracy, minimize resource consumption, and support faster business insights.

In this guide, we will explore ETL process optimization in detail, including its importance, common challenges, optimization techniques, tools, best practices, and future trends shaping modern data engineering.

What Is ETL Process Optimization?

ETL process optimization refers to improving the performance and efficiency of ETL workflows used for data integration and transformation.

An ETL pipeline typically involves three stages:

Extract

Data is collected from multiple sources such as:

- Databases

- APIs

- CRM systems

- Cloud platforms

- Spreadsheets

- Web applications

- IoT devices

Transform

The extracted data is cleaned, formatted, validated, enriched, and transformed into a structured format suitable for analysis.

Load

The processed data is loaded into a destination system such as:

- Data warehouses

- Data lakes

- Business intelligence systems

- Analytics platforms

ETL process optimization improves how these stages operate by reducing latency, improving scalability, minimizing failures, and maximizing resource efficiency.

Why ETL Process Optimization Is Important

Data-driven organizations rely on fast and reliable information processing. Poorly optimized ETL systems can create serious operational and analytical problems.

Optimizing ETL pipelines provides several important benefits.

Faster Data Processing

Efficient ETL workflows reduce the time required to process large datasets and generate insights.

Improved Scalability

Optimized systems can handle increasing data volumes without major performance degradation.

Better Data Quality

ETL optimization improves consistency, validation, and transformation accuracy.

Reduced Infrastructure Costs

Efficient pipelines consume fewer computing resources and less storage.

Enhanced Business Intelligence

Faster, cleaner data lets businesses make better decisions sooner, and in near real time where the pipeline supports it.

Common Challenges in ETL Process Optimization

Many organizations experience performance and reliability issues when managing large-scale ETL systems.

Understanding these challenges is essential before implementing optimization strategies.

Large Data Volumes

Modern organizations process terabytes or even petabytes of data regularly.

As datasets grow, ETL workflows may become slower and more resource-intensive.

Complex Data Transformations

Heavy transformation logic can create processing bottlenecks and increase execution time.

Complex joins, aggregations, validations, and calculations often impact performance.

Data Quality Problems

Inconsistent, duplicate, or incomplete data can slow ETL pipelines and reduce reporting accuracy.

Poor Resource Allocation

Improper CPU, memory, or storage allocation can negatively affect ETL performance.

Network Latency

Moving data between cloud environments, databases, and distributed systems may introduce delays.

Batch Processing Delays

Traditional batch-based ETL systems often struggle to support real-time analytics requirements.

Best Strategies for ETL Process Optimization

Organizations can significantly improve ETL performance by implementing the right optimization techniques.

Optimize Data Extraction

Efficient extraction reduces unnecessary data movement and processing overhead.

Use Incremental Data Extraction

Instead of extracting all data repeatedly, incremental extraction only processes newly added or modified records.

Benefits include:

- Faster extraction

- Reduced server load

- Lower bandwidth usage

- Improved scalability
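
As a minimal sketch of incremental extraction, the example below tracks a "watermark" (the latest `updated_at` value already processed) and pulls only rows changed after it. The `orders` table, its columns, and the `extract_incremental` helper are assumptions made for illustration, and an in-memory SQLite database stands in for a real source system.

```python
import sqlite3

def extract_incremental(conn, last_watermark):
    """Return rows changed after last_watermark, plus the new watermark."""
    rows = conn.execute(
        "SELECT id, amount, updated_at FROM orders "
        "WHERE updated_at > ? ORDER BY updated_at",
        (last_watermark,),
    ).fetchall()
    # Advance the watermark only when new rows were found.
    new_watermark = rows[-1][2] if rows else last_watermark
    return rows, new_watermark

# Hypothetical source table, built in memory for illustration.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL, updated_at TEXT)"
)
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [
        (1, 10.0, "2024-01-01T00:00:00"),
        (2, 25.5, "2024-01-02T00:00:00"),
        (3, 7.25, "2024-01-03T00:00:00"),
    ],
)

# First run picks up everything after the stored watermark.
first_batch, watermark = extract_incremental(conn, "2024-01-01T00:00:00")
# A second run with the advanced watermark finds nothing new.
second_batch, watermark_after = extract_incremental(conn, watermark)
```

In practice the watermark would be persisted between runs, for example in a small control table, so that a restarted job resumes where the last successful run ended.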

Filter Data Early

Apply filters during extraction to avoid transferring unnecessary records.

This minimizes processing time and storage requirements.
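
The difference between late and early filtering can be sketched as follows. The `events` table and its columns are hypothetical, and SQLite is used only so the example is self-contained. The same idea applies to any source that accepts a filter in the request.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE events (id INTEGER, country TEXT, payload TEXT)")
conn.executemany(
    "INSERT INTO events VALUES (?, ?, ?)",
    [(1, "US", "a"), (2, "DE", "b"), (3, "US", "c"), (4, "FR", "d")],
)

# Late filtering: every row and column is transferred, then most are discarded.
all_rows = conn.execute("SELECT * FROM events ORDER BY id").fetchall()
late = [(row[0], row[1]) for row in all_rows if row[1] == "US"]

# Early filtering: the source returns only the rows and columns that are needed.
early = conn.execute(
    "SELECT id, country FROM events WHERE country = ? ORDER BY id", ("US",)
).fetchall()
```

Both approaches produce the same result, but the early version moves two narrow rows instead of four full ones.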

Parallel Data Extraction

Extracting data from multiple sources simultaneously improves throughput and reduces total execution time.
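
A minimal sketch of parallel extraction using Python's standard thread pool is shown below. The source names and the `extract` function are placeholders, and `time.sleep` simulates the network wait of a real query or API call. Threads suit this case because extraction is usually I/O-bound.

```python
from concurrent.futures import ThreadPoolExecutor
import time

def extract(source_name):
    """Stand-in for an I/O-bound call such as a database query or API request."""
    time.sleep(0.1)  # simulated network wait
    return source_name, [f"{source_name}-row-{i}" for i in range(3)]

sources = ["crm", "billing", "web_analytics"]  # hypothetical source names

start = time.perf_counter()
with ThreadPoolExecutor(max_workers=len(sources)) as pool:
    # map() preserves input order, so results line up with `sources`.
    results = dict(pool.map(extract, sources))
elapsed = time.perf_counter() - start  # close to one wait, not three
```

The number of workers should respect what the source systems can tolerate, since too many concurrent connections can slow down an operational database.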

Improve Data Transformation Efficiency

The transformation stage is often the most resource-intensive part of ETL workflows.

Simplify Transformation Logic

Reduce unnecessary calculations, transformations, and data manipulation steps whenever possible.

Push Transformations to Databases

Modern databases are optimized for processing large queries efficiently.

Using database-side transformations can reduce ETL engine workload.
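
For illustration, the sketch below lets the database compute an aggregate so that only the summarized rows reach the ETL process. The `sales` table is an assumption, and SQLite stands in for whatever database the pipeline actually reads from.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?)",
    [("north", 100.0), ("south", 40.0), ("north", 60.0), ("south", 10.0)],
)

# The database aggregates; only one row per region reaches the ETL process.
totals = conn.execute(
    "SELECT region, SUM(amount) FROM sales GROUP BY region ORDER BY region"
).fetchall()
```

The alternative, fetching every sale and summing in application code, transfers all rows and repeats work the database engine is already built to do.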

Use In-Memory Processing

In-memory data processing is faster than disk-based operations because it avoids repeated reads and writes between steps, provided the working data fits in available memory.

Optimize Joins and Queries

Poor query performance is a common ETL bottleneck.

Best practices include:

- Index optimization

- Query tuning

- Partitioning large tables

- Reducing unnecessary joins

Optimize Data Loading

The loading phase also plays a major role in ETL process optimization.

Use Bulk Loading

Bulk loading techniques reduce transaction overhead and improve insertion speed.
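
A minimal sketch of batched loading is shown below, assuming a hypothetical `customers` table in SQLite. All rows are inserted with one `executemany` call inside a single transaction rather than committing row by row. Many warehouses also offer dedicated bulk-load commands, which are not shown here.

```python
import sqlite3

rows = [(i, f"customer-{i}") for i in range(10_000)]

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")

# One transaction and one batched call instead of 10,000 separate commits.
with conn:  # commits on success, rolls back on error
    conn.executemany("INSERT INTO customers VALUES (?, ?)", rows)

loaded = conn.execute("SELECT COUNT(*) FROM customers").fetchone()[0]
```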

Partition Large Tables

Partitioning improves loading efficiency and query performance.

Disable Indexes Temporarily

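Disabling or dropping nonessential indexes during large data loads can significantly improve performance, because the database no longer has to update every index for each inserted row.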

Indexes can be rebuilt afterward.
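
The pattern can be sketched as follows. SQLite has no command to disable an index, so this example drops the index before the load and recreates it afterward. Other databases provide their own syntax for this. The `facts` table and index name are assumptions for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE facts (id INTEGER, customer_id INTEGER, amount REAL)")
conn.execute("CREATE INDEX idx_facts_customer ON facts (customer_id)")

rows = [(i, i % 100, float(i)) for i in range(5_000)]

# Remove the index so the load does not maintain it row by row.
conn.execute("DROP INDEX idx_facts_customer")
conn.executemany("INSERT INTO facts VALUES (?, ?, ?)", rows)
# Rebuild the index once, after all rows are in place.
conn.execute("CREATE INDEX idx_facts_customer ON facts (customer_id)")
conn.commit()

index_names = [row[1] for row in conn.execute("PRAGMA index_list('facts')")]
row_count = conn.execute("SELECT COUNT(*) FROM facts").fetchone()[0]
```

Indexes that enforce uniqueness or other constraints are usually left in place, since dropping them would allow invalid rows to load.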

Compress Data

Data compression reduces storage requirements and improves transfer efficiency.
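
As a small illustration, the sketch below serializes some made-up rows to CSV, compresses them with gzip from the Python standard library, and confirms that decompression restores the original bytes. The actual compression ratio depends heavily on the data.

```python
import csv
import gzip
import io

rows = [["id", "status"]] + [[str(i), "active"] for i in range(1_000)]

# Serialize to CSV in memory, then gzip the bytes before transfer or storage.
buffer = io.StringIO()
csv.writer(buffer).writerows(rows)
raw = buffer.getvalue().encode("utf-8")
compressed = gzip.compress(raw)

# Round trip: the consumer decompresses and gets identical bytes back.
restored = gzip.decompress(compressed)
ratio = len(compressed) / len(raw)  # below 1.0 for this repetitive data
```

Compression trades CPU time for smaller transfers, so it tends to help most when the network or storage is the bottleneck.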

Parallel Processing in ETL Process Optimization

Parallel processing is one of the most effective optimization strategies for modern ETL systems.

Instead of processing tasks sequentially, pipelines can execute multiple operations simultaneously.

Benefits of Parallel Processing

- Faster execution

- Better resource utilization

- Improved scalability

- Reduced latency

Types of Parallelism

Data Parallelism

Large datasets are divided into smaller chunks processed simultaneously.
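
A minimal sketch of data parallelism is shown below: the dataset is split into chunks and the same transformation runs on each chunk concurrently. The `chunked` and `transform_chunk` helpers are hypothetical. A thread pool keeps the example simple, although CPU-heavy transformations in Python would more likely use a process pool or a distributed engine.

```python
from concurrent.futures import ThreadPoolExecutor

def chunked(items, size):
    """Yield consecutive slices of `items` with at most `size` elements."""
    for start in range(0, len(items), size):
        yield items[start:start + size]

def transform_chunk(chunk):
    """Hypothetical transformation: convert cents to dollars."""
    return [cents / 100 for cents in chunk]

amounts_in_cents = list(range(0, 1000, 50))  # 20 values
chunks = list(chunked(amounts_in_cents, 6))  # chunk sizes: 6, 6, 6, 2

with ThreadPoolExecutor(max_workers=4) as pool:
    # map() returns chunk results in order, so the output order is preserved.
    transformed = [
        value for part in pool.map(transform_chunk, chunks) for value in part
    ]
```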

Task Parallelism

Different ETL tasks run concurrently.

Pipeline Parallelism

Multiple ETL stages operate simultaneously across distributed systems.
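
Pipeline parallelism can be sketched on a single machine with one thread per stage and bounded queues between them, so that extraction, transformation, and loading overlap in time. The stage functions and the `SENTINEL` end-of-stream marker are assumptions for illustration. Distributed systems apply the same idea across machines.

```python
import queue
import threading

SENTINEL = object()  # marks the end of the stream

def extract(out_q):
    for record in range(5):
        out_q.put(record)
    out_q.put(SENTINEL)

def transform(in_q, out_q):
    # Starts working as soon as the first record arrives.
    while (item := in_q.get()) is not SENTINEL:
        out_q.put(item * 10)
    out_q.put(SENTINEL)

def load(in_q, sink):
    while (item := in_q.get()) is not SENTINEL:
        sink.append(item)

# Bounded queues apply backpressure if a downstream stage falls behind.
extracted_q = queue.Queue(maxsize=2)
transformed_q = queue.Queue(maxsize=2)
sink = []

stages = [
    threading.Thread(target=extract, args=(extracted_q,)),
    threading.Thread(target=transform, args=(extracted_q, transformed_q)),
    threading.Thread(target=load, args=(transformed_q, sink)),
]
for stage in stages:
    stage.start()
for stage in stages:
    stage.join()
```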

ETL Process Optimization Through Automation

Automation improves reliability and reduces manual intervention in ETL workflows.

Workflow Scheduling

Automated scheduling tools help manage ETL jobs efficiently.

Benefits include:

- Reduced human error

- Better timing control

- Improved monitoring

- Consistent execution

Automated Error Handling

Modern ETL systems use automated alerts and recovery mechanisms to minimize downtime.
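
One common recovery mechanism is retrying a failed step with exponential backoff and raising an alert only when every attempt fails. The sketch below assumes a hypothetical `run_with_retries` helper and a deliberately flaky task. Orchestration tools typically provide comparable retry settings of their own.

```python
import time

def run_with_retries(task, max_attempts=3, base_delay=0.01, on_failure=None):
    """Run task(); retry with exponential backoff, alert if all attempts fail."""
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception as exc:
            if attempt == max_attempts:
                if on_failure:
                    on_failure(exc)  # e.g. notify the on-call engineer
                raise
            # Wait base_delay, then 2x, then 4x, and so on between attempts.
            time.sleep(base_delay * 2 ** (attempt - 1))

calls = {"count": 0}

def flaky_load():
    """Hypothetical step that fails twice before succeeding."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("temporary outage")
    return "loaded"

result = run_with_retries(flaky_load)
```

Retries are only safe when the step is idempotent, meaning that running it twice leaves the target in the same state as running it once.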

Metadata Management

Metadata automation improves data lineage tracking and system visibility.

Cloud-Based ETL Process Optimization

Cloud computing has transformed ETL architecture significantly.

Cloud-native ETL systems offer scalability, flexibility, and cost optimization advantages.

Benefits of Cloud ETL Systems

- Elastic scalability

- Pay-as-you-go pricing

- Distributed processing

- Faster deployment

- High availability

Popular Cloud ETL Platforms

AWS Glue

AWS Glue is a serverless ETL service designed for scalable data integration.

Google Cloud Dataflow

Dataflow supports real-time and batch data processing pipelines.

Azure Data Factory

Azure Data Factory helps organizations create cloud-based data workflows efficiently.

Snowflake

Snowflake supports high-performance cloud data warehousing and transformation operations.

Real-Time ETL Process Optimization

Traditional ETL systems often rely on scheduled batch processing.

However, many businesses now require real-time analytics and faster decision-making.

Benefits of Real-Time ETL

- Immediate insights

- Faster reporting

- Better customer experiences

- Improved operational efficiency

Technologies Supporting Real-Time ETL Process Optimization

Apache Kafka

Kafka enables real-time event streaming and data pipeline management.

Apache Spark

Spark supports distributed batch processing and, through Structured Streaming, near-real-time stream processing.

Apache Flink

Apache Flink is designed for low-latency stream processing.

ETL Process Optimization Best Practices

Following best practices helps organizations maintain reliable and scalable ETL environments.

Monitor Pipeline Performance

Continuous monitoring helps identify bottlenecks and failures quickly.

Important metrics include:

- Processing time

- CPU usage

- Memory consumption

- Error rates

- Data latency
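
As a rough sketch of how processing time and error rates might be collected, the context manager below records run counts, failures, and elapsed seconds per stage. The `track` helper and the in-memory `metrics` dictionary are assumptions. A production pipeline would normally send these values to a monitoring system instead.

```python
import time
from contextlib import contextmanager

metrics = {}

@contextmanager
def track(stage):
    """Record duration and outcome for one run of a pipeline stage."""
    start = time.perf_counter()
    entry = metrics.setdefault(stage, {"runs": 0, "errors": 0, "seconds": 0.0})
    entry["runs"] += 1
    try:
        yield
    except Exception:
        entry["errors"] += 1
        raise
    finally:
        entry["seconds"] += time.perf_counter() - start

with track("transform"):
    total = sum(range(1000))

try:
    with track("load"):
        raise RuntimeError("target unavailable")  # simulated failure
except RuntimeError:
    pass

error_rate = metrics["load"]["errors"] / metrics["load"]["runs"]
```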

Use Data Validation Rules

Data validation improves consistency and reduces reporting errors.
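
Validation rules can be expressed as small checks applied to each record before loading. The sketch below uses two made-up rules (a plausible email and a non-negative amount) and routes failing records to a rejected list along with the reasons, rather than silently dropping them.

```python
def validate(record):
    """Return a list of rule violations for one record (empty means valid)."""
    problems = []
    if not record.get("email") or "@" not in record["email"]:
        problems.append("invalid email")
    if not isinstance(record.get("amount"), (int, float)) or record["amount"] < 0:
        problems.append("amount must be a non-negative number")
    return problems

# Hypothetical input records.
records = [
    {"id": 1, "email": "a@example.com", "amount": 12.5},
    {"id": 2, "email": "not-an-email", "amount": 3},
    {"id": 3, "email": "c@example.com", "amount": -1},
]

valid, rejected = [], []
for record in records:
    problems = validate(record)
    # Keep the reasons with each rejected record for later review.
    (rejected if problems else valid).append((record["id"], problems))
```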

Implement Logging and Auditing

Detailed logs improve troubleshooting and compliance tracking.

Design for Scalability

ETL architectures should support future data growth and increasing workloads.

Reduce Data Movement

Moving large datasets unnecessarily increases processing costs and latency.

Maintain Proper Documentation

Well-documented pipelines improve collaboration and maintenance efficiency.

ETL Process Optimization Tools

Many tools help organizations optimize ETL workflows effectively.

Informatica

Informatica is one of the most widely used enterprise ETL platforms.

Talend

Talend provides open-source and enterprise data integration solutions.

Apache Airflow

Airflow helps automate and orchestrate ETL workflows.

SSIS

SQL Server Integration Services is commonly used for Microsoft-based ETL environments.

Matillion

Matillion focuses on cloud-native ETL and ELT workflows.

Pentaho

Pentaho supports data integration, analytics, and reporting capabilities.

Difference Between ETL and ELT

Modern data systems increasingly use ELT instead of traditional ETL models.

Understanding the difference is important in optimization discussions.

ETL Model

In ETL:

- Data is extracted

- Data is transformed

- Data is loaded

Transformation occurs before loading.

ELT Model

In ELT:

- Data is extracted

- Data is loaded

- Data is transformed

Transformation occurs inside the target data warehouse.

ELT is popular in cloud environments because modern warehouses can scale compute on demand and run transformations in parallel, close to where the data is stored.
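
The two orderings can be compared side by side in a small sketch. SQLite stands in for the target warehouse, and the table names and sample rows are invented for illustration. In the ETL function the cleaning happens in pipeline code before loading. In the ELT function the raw rows are loaded first and cleaned with SQL inside the target.

```python
import sqlite3

raw = [(" Alice ", "10"), ("BOB", "20")]  # untidy source rows

def etl(conn):
    # Transform in the pipeline, then load the cleaned rows.
    cleaned = [(name.strip().lower(), int(amount)) for name, amount in raw]
    conn.execute("CREATE TABLE etl_target (name TEXT, amount INTEGER)")
    conn.executemany("INSERT INTO etl_target VALUES (?, ?)", cleaned)
    return conn.execute(
        "SELECT name, amount FROM etl_target ORDER BY name"
    ).fetchall()

def elt(conn):
    # Load raw rows first, then transform with SQL inside the target.
    conn.execute("CREATE TABLE staging (name TEXT, amount TEXT)")
    conn.executemany("INSERT INTO staging VALUES (?, ?)", raw)
    conn.execute(
        "CREATE TABLE elt_target AS "
        "SELECT LOWER(TRIM(name)) AS name, CAST(amount AS INTEGER) AS amount "
        "FROM staging"
    )
    return conn.execute(
        "SELECT name, amount FROM elt_target ORDER BY name"
    ).fetchall()

conn = sqlite3.connect(":memory:")
etl_rows = etl(conn)
elt_rows = elt(conn)
```

Both paths end with the same cleaned table. The difference is where the transformation work runs, which determines which system needs the compute capacity.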

AI and Machine Learning in ETL Process Optimization

Artificial intelligence is becoming increasingly important in data engineering.

AI-powered ETL systems can improve automation, anomaly detection, and workflow optimization.

AI Applications in ETL

- Automated schema mapping

- Intelligent error detection

- Predictive performance optimization

- Automated data cleansing

- Smart workload balancing

Machine learning models can also identify performance bottlenecks proactively.

Security Considerations in ETL Process Optimization

Security is a major concern when processing sensitive business data.

Organizations should implement strong security measures throughout ETL pipelines.

Important Security Practices

- Data encryption

- Access controls

- Role-based permissions

- Secure API connections

- Compliance monitoring

- Audit logging

Protecting data integrity and confidentiality is critical for modern ETL systems.

Future Trends in ETL Process Optimization

The future of ETL optimization is closely connected to cloud computing, AI, and real-time analytics.

Several major trends are shaping the industry.

Increased Adoption of ELT

Cloud-native data warehouses continue driving ELT adoption.

Serverless Data Pipelines

Serverless architectures reduce infrastructure management complexity.

AI-Driven Automation

AI-powered systems will increasingly automate optimization and monitoring tasks.

Real-Time Analytics Expansion

Businesses continue demanding faster access to insights and operational intelligence.

Data Fabric Architectures

Data fabric architectures aim to provide a unified layer for integrating and managing data across distributed environments.

Final Thoughts on ETL Process Optimization

ETL process optimization plays a critical role in modern data management and analytics systems. As organizations continue generating massive amounts of data, efficient ETL workflows become essential for scalability, reliability, and business intelligence.

Optimized ETL systems improve performance, reduce operational costs, enhance data quality, and support faster decision-making across organizations.

Whether businesses use traditional ETL pipelines, cloud-native architectures, or real-time streaming systems, optimization strategies such as parallel processing, automation, query tuning, and AI-driven monitoring can significantly improve efficiency.

As technology continues evolving, organizations that invest in scalable and optimized data integration systems will gain stronger competitive advantages in the data-driven digital economy.

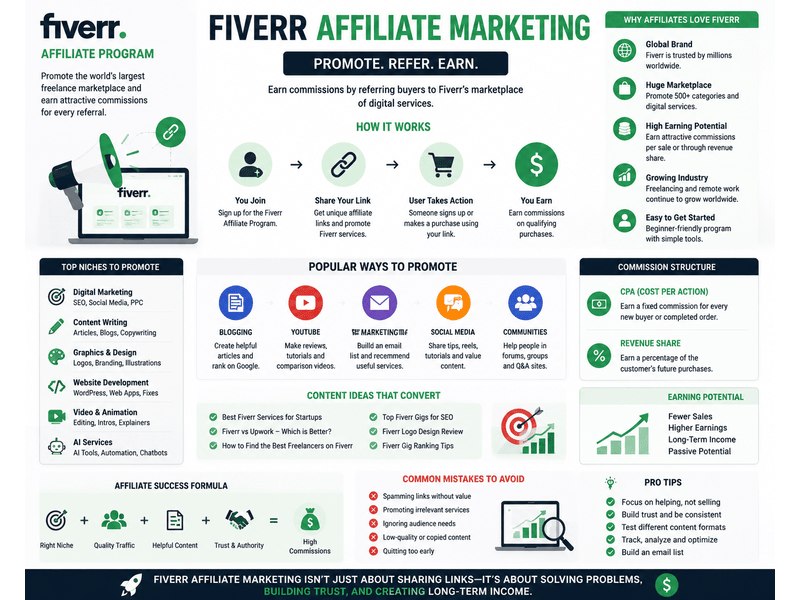

Fiverr Affiliate Program: A Guide to Maximizing Affiliate Earnings

Affiliate marketing continues to grow as one of the most popular online income opportunities in the digital economy. Thousands of…

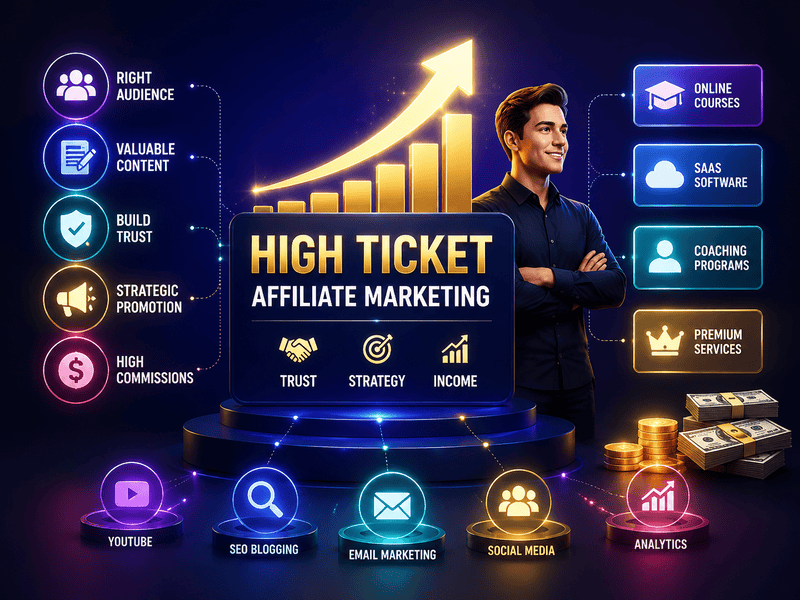

High Ticket Affiliate Marketing: How to Earn Higher Commissions

Affiliate marketing has become one of the most popular online business models in the digital economy. Thousands of creators, bloggers,…

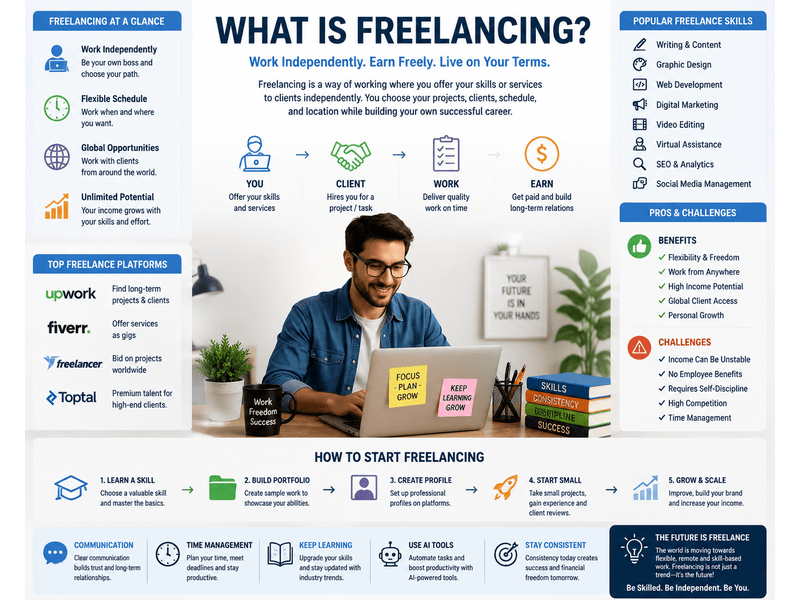

What Is Freelancing: Complete Beginner Guide to Freelance Work

The modern work environment has changed dramatically over the last decade. Traditional office jobs are no longer the only way…

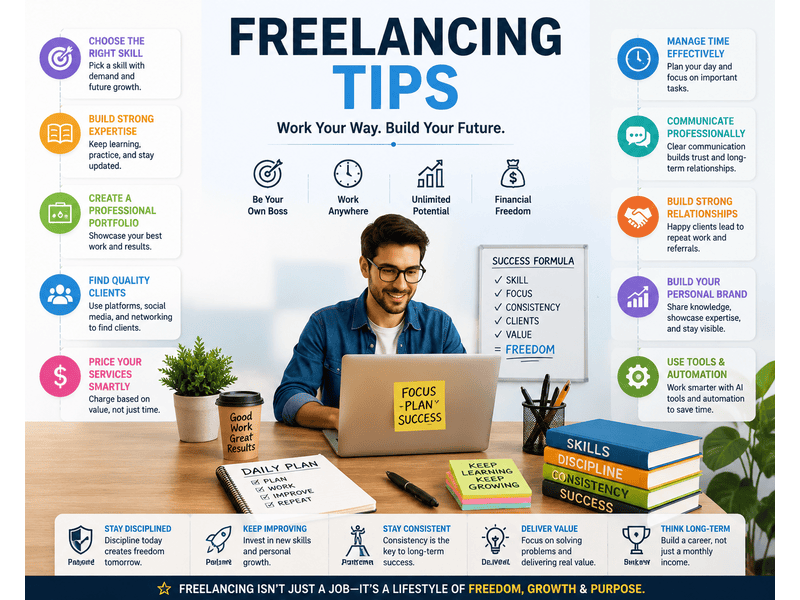

Freelancing Tips: Proven Strategies to Build Freelance Career

Freelancing has become one of the most attractive career choices in the modern digital economy. Millions of professionals worldwide are…

ETL Process Optimization: Best Strategies to Improve Data Performance and

Modern businesses generate enormous amounts of data every single day. From customer interactions and sales records to website analytics and…

Marketing Tools for Small Business: Best Platforms to Grow Faster.

Running a small business in today’s digital world is more competitive than ever. Whether you own an online store, local…

No responses yet